With high-speed Internet now widely available, users' expectations of the performance of web-based software systems have changed dramatically. For instance, about 40% of customers will abandon a web application if its response time exceeds 3 seconds. Furthermore, Amazon, a leading online retailer, reports that an additional 100 milliseconds (ms) of delay in response time could cost it a 1% drop in sales. Therefore, ensuring the reliability and efficiency of a software system is imperative for such companies and for the overall success of their software projects.

In general, two-thirds of performance bottlenecks are triggered only by specific input values. However, finding the input values for performance test cases that expose performance bottlenecks in a large-scale, complex system within a reasonable amount of time is a notoriously difficult and expensive task. The reason is that there can be numerous combinations of test input values, and executing even one such combination against the system under test (SUT) can take a considerable amount of time. Thus, it is almost impossible for test engineers to exhaustively test every possible combination. The problem becomes even more challenging when the SUT is a black box, meaning that we cannot inspect its internal dynamics. The only way to interact with the SUT is through its public interfaces, and the only way to monitor its key performance indicators (KPIs) is through external observations during execution.
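To give a feel for why exhaustive testing is impractical, the following back-of-the-envelope sketch estimates the cost of running every input combination. The parameter counts and the per-run duration are purely illustrative assumptions, not figures from the study.

```python
import math

def exhaustive_test_cost(values_per_parameter, seconds_per_run):
    """Return (number of combinations, total sequential run time in days)."""
    # Each parameter is varied independently, so the counts multiply.
    combinations = math.prod(values_per_parameter)
    total_days = combinations * seconds_per_run / 86_400  # seconds per day
    return combinations, total_days

# Hypothetical SUT: 5 input parameters with 20 candidate values each,
# and 2 seconds to execute one combination.
combinations, days = exhaustive_test_cost([20] * 5, seconds_per_run=2)
print(f"{combinations} combinations, about {days:.0f} days of sequential runs")
```

Even under these modest assumptions, the space holds 3.2 million combinations and would take roughly 74 days to execute one after another.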

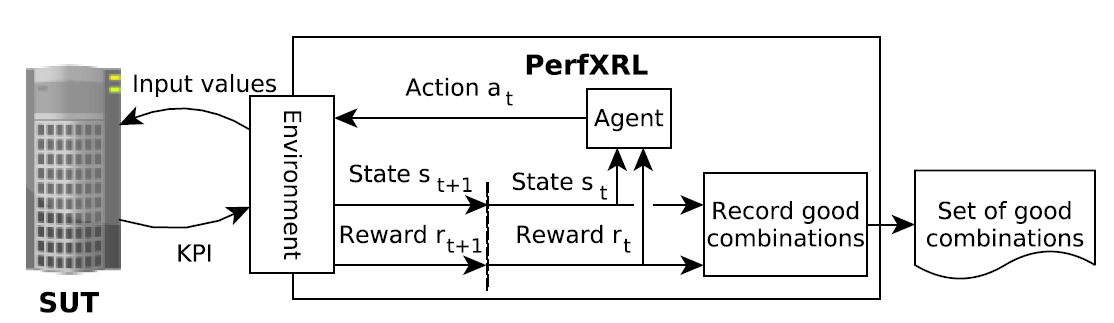

To address the above issues, we formulate the test data generation problem for performance test cases as a reinforcement learning problem. Reinforcement learning (RL) is a class of machine learning techniques in which an agent learns to interact with an unknown environment in order to accomplish a given goal through a trial-and-error process.
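The trial-and-error loop described above can be sketched with a small tabular Q-learning agent. This is only an illustration under stated assumptions: the toy SUT, the two-parameter input space, the actions, and the reward (the observed response time) are all hypothetical, and tabular Q-learning stands in for whichever RL technique is actually used.

```python
import random

SIZE = 10  # assumed: each of the two input parameters takes values 0..9
ACTIONS = [(1, 0), (-1, 0), (0, 1), (0, -1)]  # change one input by one step

def toy_sut_response_time(x, y):
    """Hypothetical black-box SUT: simulated response time in ms, slowest at (7, 2)."""
    return 50.0 + 400.0 / (1 + abs(x - 7) + abs(y - 2))

def step(state, action):
    """Apply an action, execute the SUT, and return (next_state, reward)."""
    x = min(max(state[0] + action[0], 0), SIZE - 1)
    y = min(max(state[1] + action[1], 0), SIZE - 1)
    # Assumed reward: a slower response is more interesting to the tester.
    return (x, y), toy_sut_response_time(x, y)

def explore(episodes=200, steps=20, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Run epsilon-greedy Q-learning; return the slowest (inputs, time) observed."""
    rng = random.Random(seed)
    q = {}  # Q-table: (state, action_index) -> estimated value
    worst = ((0, 0), 0.0)
    for _ in range(episodes):
        state = (rng.randrange(SIZE), rng.randrange(SIZE))
        for _ in range(steps):
            if rng.random() < epsilon:  # explore: try a random action
                a = rng.randrange(len(ACTIONS))
            else:  # exploit: pick the action with the highest estimate
                a = max(range(len(ACTIONS)), key=lambda i: q.get((state, i), 0.0))
            nxt, reward = step(state, ACTIONS[a])
            best_next = max(q.get((nxt, i), 0.0) for i in range(len(ACTIONS)))
            old = q.get((state, a), 0.0)
            # Standard Q-learning update toward reward + discounted future value.
            q[(state, a)] = old + alpha * (reward + gamma * best_next - old)
            if reward > worst[1]:
                worst = (nxt, reward)
            state = nxt
    return worst

inputs, response_ms = explore()
print(f"slowest inputs found: {inputs}, response time {response_ms:.1f} ms")
```

The agent never looks inside `toy_sut_response_time`; it only chooses inputs and observes the resulting response time, which mirrors the black-box setting.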

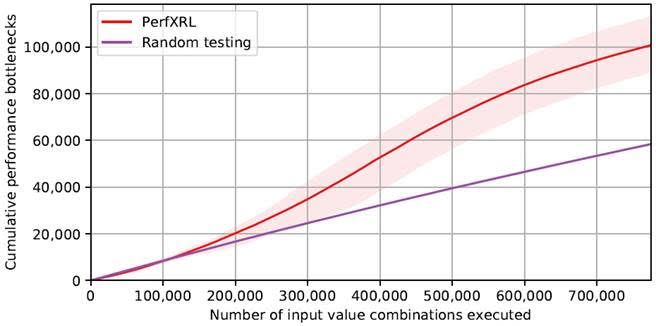

To that end, we present an approach for Performance EXploration using RL (PerfXRL), which explores a large space of combinations of input parameter values in order to find those that trigger performance bottlenecks (denoted as good combinations) in a black-box system without any prior domain knowledge. Our approach uses a Dueling Deep Q-Network (DDQN), which is an RL technique. In addition, we developed tool support for our approach in Python. We empirically evaluated the approach and show that PerfXRL is able to detect 72% more performance bottlenecks than random testing.
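For readers unfamiliar with dueling networks, the sketch below shows the aggregation step that characterizes the dueling architecture in its commonly used form: the network estimates a state value V(s) and per-action advantages A(s, a), and combines them as Q(s, a) = V(s) + (A(s, a) - mean of A(s, ·)). The numbers are made up, and the function names are hypothetical; the text above does not specify PerfXRL's network layout, so this should not be read as its implementation.

```python
def dueling_q_values(state_value, advantages):
    """Combine a state value and per-action advantages into Q-values."""
    # Subtracting the mean advantage keeps V and A identifiable.
    mean_advantage = sum(advantages) / len(advantages)
    return [state_value + (a - mean_advantage) for a in advantages]

def greedy_action(q_values):
    """Return the index of the action with the highest Q-value."""
    return max(range(len(q_values)), key=lambda i: q_values[i])

# Illustrative outputs of the two network streams for one state.
q_values = dueling_q_values(state_value=2.0, advantages=[1.0, 0.0, -1.0])
print(q_values, greedy_action(q_values))
```

In a full Dueling DQN, both streams would be heads of a neural network trained with the usual Q-learning loss; only the combination step is shown here.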

More details can be found in: Tanwir Ahmad, Adnan Ashraf, Dragos Truscan, and Ivan Porres. Exploratory Performance Testing Using Reinforcement Learning. In Euromicro Conference on Software Engineering and Advanced Applications (SEAA 2019), Kallithea, Chalkidiki, Greece, August 28–30, 2019 (author version).