Embedded systems normally execute applications with both functional and non-functional constraints, and the underlying hardware and software components can be very complex and heterogeneous. Different techniques, at different levels of abstraction, are used to manage the complexity of developing such systems, such as model-based engineering, model-based testing, hardware-in-the-loop testing, and on-target programming support.

Along the development flow, there is a point where an actual (i.e. not virtual) target must be programmed. If the target is composed of multiple “isles” of computational elements, namely a heterogeneous multi-core, the programmer may need to spend some effort parallelizing the application. Several supporting libraries exist for this purpose. Among them, one of the best known is OpenMP, which helps designers parallelize applications written in C/C++ and Fortran that run on shared-memory platforms.

OpenMP is an implicit parallelization technique: to split the work of a task among multiple threads of execution, the programmer does not have to assign work to each thread explicitly (as in explicit parallelism). The parallelization can be partially guided through OpenMP directives and clauses, which specify the work that a thread should perform, the scheduling type, the atomicity of some operations, etc.
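As a minimal sketch of what such guidance can look like in C, the two functions below parallelize a loop with a scheduling clause, a reduction, and an atomic update. The function names `sum_squares` and `count_even` are hypothetical and chosen only for illustration; they are not part of F-OMP. Built without OpenMP support (e.g. without `-fopenmp` on GCC), the pragmas are ignored and the code runs serially with the same result.

```c
#include <assert.h>
#include <stddef.h>

/* Sum of i*i for i in [0, n).
 * schedule(static): iterations are split into contiguous chunks of
 *                   roughly equal size, one per thread.
 * reduction(+:sum): each thread accumulates into a private copy of
 *                   sum; the copies are added together at the end. */
long sum_squares(long n)
{
    long sum = 0;
    #pragma omp parallel for schedule(static) reduction(+:sum)
    for (long i = 0; i < n; i++) {
        sum += i * i;
    }
    return sum;
}

/* Count the even elements of v[0..n-1].
 * schedule(dynamic, 4): threads grab chunks of 4 iterations on demand.
 * omp atomic:           the shared counter is updated atomically, so
 *                       concurrent increments are not lost. */
long count_even(const int *v, long n)
{
    assert(v != NULL || n == 0);
    long count = 0;
    #pragma omp parallel for schedule(dynamic, 4)
    for (long i = 0; i < n; i++) {
        if (v[i] % 2 == 0) {
            #pragma omp atomic
            count++;
        }
    }
    return count;
}
```

Note that the code never states which thread executes which iteration: that assignment is left to the OpenMP runtime, and the clauses only constrain it. In practice the counter in `count_even` would usually be a reduction as well; the atomic form is used here only to show the directive.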

The implicit parallelism of OpenMP has two opposite faces. On the one hand, it is simple to apply, as a serial program can be parallelized just by adding some directives. On the other hand, it makes it hard to control all the factors that affect performance, such as memory accesses, cache behaviour, and mutual exclusion among threads. This is particularly true when resources are limited, as in embedded systems.
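One such hidden factor is false sharing, where threads write to different variables that happen to sit in the same cache line. The sketch below contrasts a packed counter array with a padded one. The names `count_packed`, `count_padded`, `NUM_SLOTS`, and `CACHE_LINE_BYTES` are hypothetical, and the 64-byte line size is an assumption that must be adjusted for the actual target. Both versions compute the same result; only their memory layout differs.

```c
#include <assert.h>

#define NUM_SLOTS 4          /* hypothetical: one counter per worker thread */
#define CACHE_LINE_BYTES 64  /* assumed cache-line size; target-specific */

/* A counter padded so that consecutive array elements are a full
 * cache line apart and therefore never share a line. */
struct padded_counter {
    volatile long value;
    char pad[CACHE_LINE_BYTES - sizeof(long)];
};

/* Packed layout: the counters are adjacent in memory, so several of
 * them can fall in one cache line. Each slot is written by only one
 * thread (no data race), yet the line may bounce between cores. */
long count_packed(long iters_per_slot)
{
    volatile long counters[NUM_SLOTS] = {0};
    assert(iters_per_slot >= 0);
    #pragma omp parallel for num_threads(NUM_SLOTS) schedule(static, 1)
    for (int s = 0; s < NUM_SLOTS; s++) {
        for (long i = 0; i < iters_per_slot; i++) {
            counters[s]++;
        }
    }
    long total = 0;
    for (int s = 0; s < NUM_SLOTS; s++) {
        total += counters[s];
    }
    return total;
}

/* Padded layout: same computation, but each counter has a cache line
 * to itself, which removes the false sharing. */
long count_padded(long iters_per_slot)
{
    struct padded_counter counters[NUM_SLOTS] = {0};
    assert(iters_per_slot >= 0);
    #pragma omp parallel for num_threads(NUM_SLOTS) schedule(static, 1)
    for (int s = 0; s < NUM_SLOTS; s++) {
        for (long i = 0; i < iters_per_slot; i++) {
            counters[s].value++;
        }
    }
    long total = 0;
    for (int s = 0; s < NUM_SLOTS; s++) {
        total += counters[s].value;
    }
    return total;
}
```

Nothing in the source code of `count_packed` signals a problem, which is why this kind of effect is hard to spot without run-time feedback. The `volatile` qualifier is there only to keep the compiler from collapsing the inner loop into a single addition.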

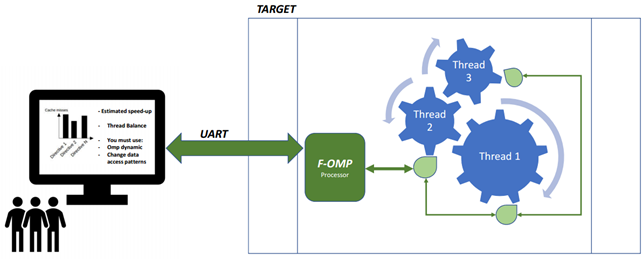

To address this, F-OMP proposes a mechanism that provides feedback on how OpenMP is being used and guides programmers toward the proper use of its directives, as shown in the figure above. In particular, it proposes a monitoring infrastructure, drawn in green, to be used in an embedded target developed on FPGA (at least in the prototyping phase). It transforms data collected at run-time into useful information by means of proper metrics (estimated speed-up, load balancing, and false sharing) without introducing software overhead. This last point is particularly important in embedded systems design, as even a minimal perturbation can change the behavior of the whole system. To deal with this problem, F-OMP uses a hardware monitoring system that requires additional on-chip area but does not affect execution time.
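The exact definitions of the F-OMP metrics are not given here. Purely as an illustration of how raw per-thread measurements could be turned into such indicators, the sketch below uses two common textbook formulations, which are assumptions and not necessarily those adopted by F-OMP: speed-up estimated as total busy time divided by the busy time of the slowest thread, and load balance as mean busy time divided by maximum busy time. The function names and the `busy` array (for instance, cycle counts read back from a monitor) are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Estimated speed-up from per-thread busy cycles (assumed formula):
 * total work divided by the work of the slowest thread. It equals n
 * when all threads are equally loaded and 1.0 when a single thread
 * does everything. Returns 0.0 if no work was recorded. */
double estimated_speedup(const unsigned long *busy, size_t n)
{
    assert(busy != NULL && n > 0);
    unsigned long total = 0;
    unsigned long longest = 0;
    for (size_t i = 0; i < n; i++) {
        total += busy[i];
        if (busy[i] > longest) {
            longest = busy[i];
        }
    }
    return longest ? (double)total / (double)longest : 0.0;
}

/* Load balance (assumed formula): mean busy time over maximum busy
 * time, i.e. the estimated speed-up divided by the thread count.
 * 1.0 means perfectly balanced; values near 1/n mean one thread
 * carries almost all of the load. */
double load_balance(const unsigned long *busy, size_t n)
{
    return estimated_speedup(busy, n) / (double)n;
}
```

For example, four threads with 100 busy cycles each give an estimated speed-up of 4.0 and a load balance of 1.0, while a distribution of 400, 0, 0, 0 gives 1.0 and 0.25 respectively.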

We have implemented F-OMP on a Zynq-7000 SoC, with a dual-core ARM processor as the master processing element and a four-core Leon3 SMP as an isle of computational elements. This isle runs a Linux operating system, which provides support for OpenMP applications.

F-OMP was presented at the DAC 2017 conference, and a video introduction is available at the following link: